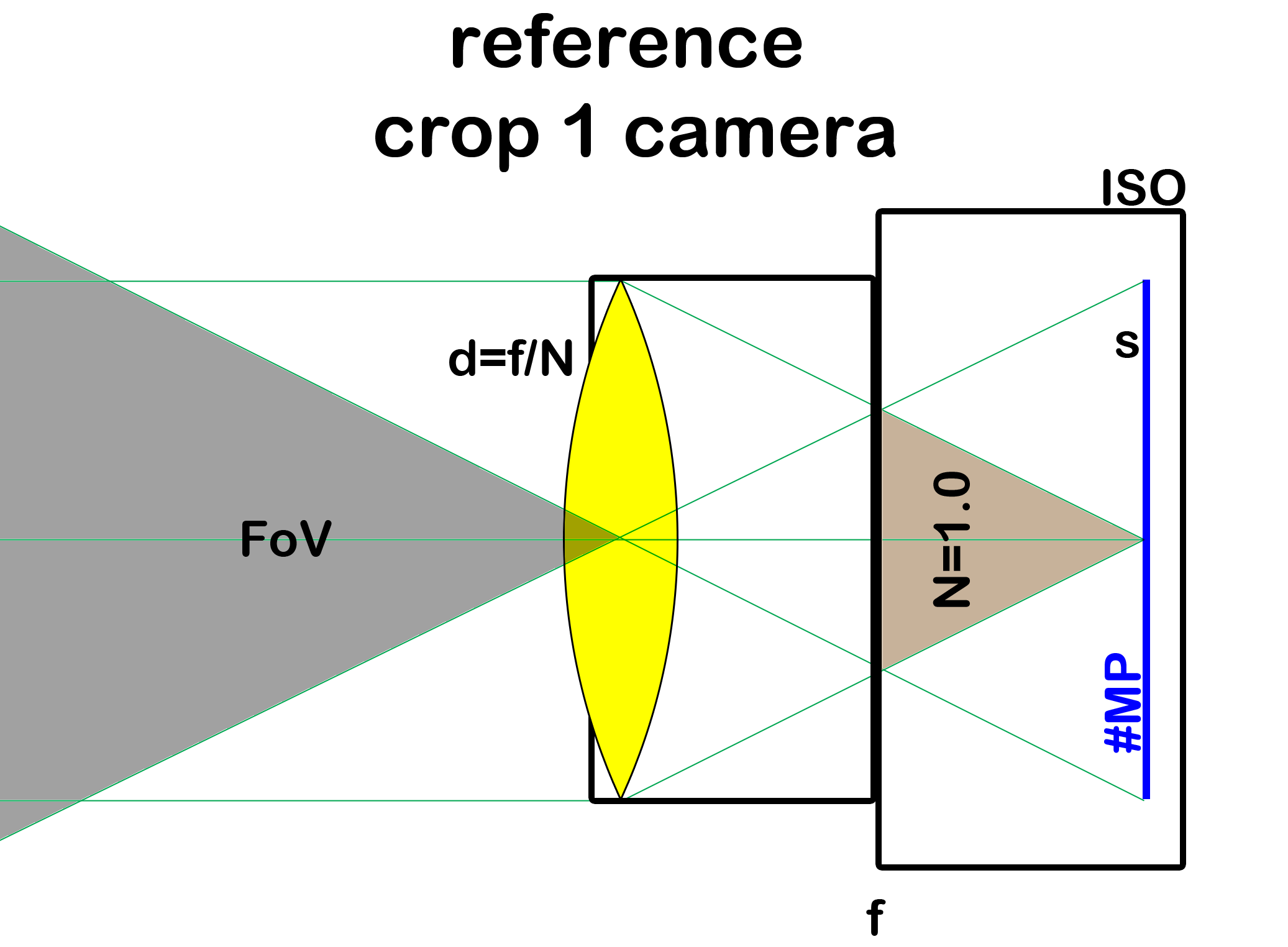

Various parameters (or variables) of a real or reference camera are depicted above.

In preparation for an article discussing the advantages and disadvantages of various sensor sizes for a given camera performance, I want to establish a common ground for such discussions.

I have prepared a white paper which dives much deeper into the topic than is possible in this short blog article. You may find it here:

The short version is this: An image contains no information whatsoever about the size of the sensor in the camera that was used to capture it. None. Nothing. Nada. (except EXIF of course ;) ) The proof is beyond the scope of this blog article, which only gives some clues. But this is a fact, trust me.

Therefore, all cameras which could have captured a given image form a so-called equivalence class: they are all equivalent and produce indistinguishable images, yet they have different-sized sensors! By camera, I mean a camera together with all the parameter settings it used to capture an image, such as the variables shown in the title image. Changing any variable "creates" a different camera. The exposure time used to capture an image is defined implicitly too: it is the one giving correct exposure (and it is, of course, a constant for indistinguishable images).

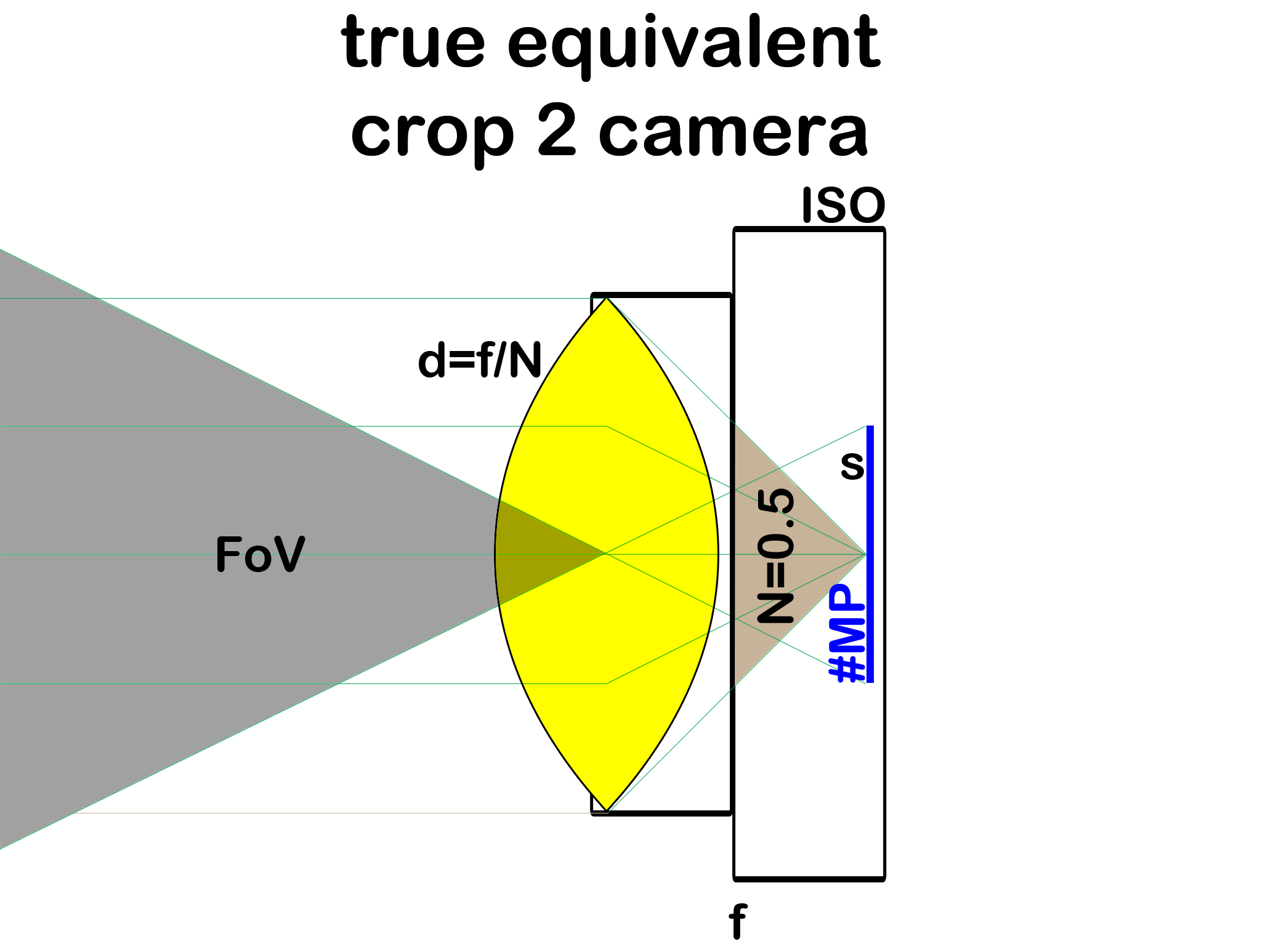

The following image shows an equivalent camera where the sensor has only half the size of the first or reference camera, i.e., an equivalent crop-2 camera:

The camera's lens has the same absolute diameter but it's focal length is shorter to maintain a common field of view. The equivalent crop-2 camera has a different F-stop and ISO sensitivity.
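A minimal sketch of this scaling, assuming a simple model in which the crop factor k is the ratio of sensor diagonals: focal length and F-number shrink by k and ISO by k² (consistent with the FourThirds ISO 100 vs. full frame ISO 400 comparison in this article), while field of view, aperture diameter d = f/N and exposure time stay fixed. The `Camera` class, the `equivalent` function and the 50mm f/2 ISO 400 reference settings are hypothetical illustrations, not taken from the white paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Camera:
    """Illustrative camera settings (hypothetical model)."""
    crop: float       # crop factor relative to full frame (full frame = 1.0)
    focal_mm: float   # real focal length f
    f_number: float   # N = f / d
    iso: float        # ISO setting
    shutter_s: float  # exposure time t

    @property
    def aperture_mm(self) -> float:
        """Physical aperture diameter d = f / N."""
        return self.focal_mm / self.f_number


def equivalent(ref: Camera, crop: float) -> Camera:
    """Scale a reference camera to a sensor with the given crop factor.

    Field of view, aperture diameter d and exposure time stay constant;
    focal length and F-number shrink by k, ISO by k**2 (k = crop / ref.crop).
    """
    k = crop / ref.crop
    return Camera(
        crop=crop,
        focal_mm=ref.focal_mm / k,
        f_number=ref.f_number / k,
        iso=ref.iso / k**2,
        shutter_s=ref.shutter_s,
    )


ref = Camera(crop=1.0, focal_mm=50.0, f_number=2.0, iso=400.0, shutter_s=1 / 125)
half = equivalent(ref, crop=2.0)
print(half)
print(ref.aperture_mm, half.aperture_mm)  # 25.0 25.0
```

The crop-2 camera comes out as a 25mm f/1 lens at ISO 100 with the same shutter time, and both lenses have the same 25mm aperture diameter.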

Main claim:

Any discussion about the impact of varying sensor sizes must be based on cameras that have first been made equivalent. Otherwise, any comparison will just reveal the differences between the parameters the respective cameras were set to, and nothing else. Such a result would be trivial, well known, and not worth further discussion.

Such trivial results include the claims that a larger sensor produces a shallower depth of field or less image noise. This is not true! Such results merely mean that the cameras were used with non-equivalent settings, e.g., with lenses of different diameter d, which means lenses of different weight and cost. Another example is ISO comparisons between cameras with different-sized sensors but the same ISO setting. Such comparisons are pointless! Instead, compare a FourThirds camera at ISO 100 with a full frame camera at ISO 400, because only then are they equivalent. Not doing so just compares the size of lenses, which a ruler can do just as well.
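The ISO rule can be checked mechanically. This sketch, under the same simple scaling assumptions (focal length and F-number multiplied by the crop factor, ISO by its square), converts each setting to full-frame terms and compares them. It treats FourThirds as an exact crop-2 system, which is an approximation; the function names and example settings are hypothetical.

```python
import math


def ff_equivalent(crop: float, focal_mm: float, f_number: float, iso: float) -> tuple:
    """Return (focal length, F-number, ISO) expressed in full-frame terms.

    Simple scaling model: focal length and F-number scale with the crop
    factor, ISO with its square. The aperture diameter d = f / N is unchanged.
    """
    return focal_mm * crop, f_number * crop, iso * crop**2


def is_equivalent(a: tuple, b: tuple, rel_tol: float = 1e-6) -> bool:
    """True if two (crop, f, N, ISO) settings map to the same full-frame values."""
    return all(
        math.isclose(x, y, rel_tol=rel_tol)
        for x, y in zip(ff_equivalent(*a), ff_equivalent(*b))
    )


# Hypothetical settings: (crop factor, focal length in mm, F-number, ISO)
full_frame = (1.0, 50.0, 2.0, 400.0)
four_thirds_iso100 = (2.0, 25.0, 1.0, 100.0)
four_thirds_iso400 = (2.0, 25.0, 1.0, 400.0)

print(is_equivalent(full_frame, four_thirds_iso100))  # True
print(is_equivalent(full_frame, four_thirds_iso400))  # False
```

Keeping the ISO the same (ISO 400 on both) breaks equivalence: in full-frame terms the FourThirds camera would be running at ISO 1600.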

Secondary claim:

Once equivalent cameras are compared, the results become interesting, because any remaining difference is now due to deviations from an ideal camera, such as lens aberrations, production or design tolerances, or compromises in a CMOS production process. The white paper explains that such deviations are generally expected to be larger for smaller sensors. One such deviation is obvious: an equivalent camera may not exist for a given sensor size, e.g., because the required f/0.1 aperture is infeasible.
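A short sketch of when an equivalent camera stops existing. It assumes the required F-number is the reference F-number divided by the crop factor (so that d stays constant) and uses the theoretical lower bound N = 0.5 for a lens in air (numerical aperture at most 1); real lenses reach practical limits well before that bound. The f/1.4 reference lens and the list of crop factors are hypothetical examples.

```python
# Theoretical minimum F-number for a lens in air: N = 1 / (2 * NA) with NA <= 1.
N_MIN_IN_AIR = 0.5


def required_f_number(ref_f_number: float, crop: float) -> float:
    """F-number the crop camera needs to keep the reference aperture diameter d."""
    return ref_f_number / crop


def equivalent_exists(ref_f_number: float, crop: float) -> bool:
    """False when the required F-number falls below the physical limit in air."""
    return required_f_number(ref_f_number, crop) >= N_MIN_IN_AIR


# Hypothetical reference: a full-frame lens at f/1.4, mapped to smaller sensors.
for crop in (1.5, 2.0, 2.7, 5.6):
    n = required_f_number(1.4, crop)
    verdict = "possible in principle" if equivalent_exists(1.4, crop) else "impossible"
    print(f"crop {crop}: f/{n:.2f} -> {verdict}")
```

At crop 5.6 the required f/0.25 lies beyond the f/0.5 limit, so no equivalent camera exists for that sensor size.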

I will follow up this article with a more complete article on the impact of sensor size on image quality.

Stay tuned and enjoy your read :)

Some much-needed clarity. Will digest.

Hello Falk,

Thanks for setting up the equivalence classes, which help a lot when thinking about sensor sizes.

I have questions though:

1) What kind of ISO definition do you apply?

2) In the type-1 equivalence class, each pixel of the crop camera (ideally) receives the same photons as the corresponding 'reference pixel'. Then the same amplification is needed for the corresponding image pixel (e.g., in sRGB) in both the reference and the crop camera, right? You claim that's wrong because of the different ISOs?

Kerusker

Hi Kerusker,

Thanks for stopping by. ISO is a standard for sensitivity; refer to http://www.dxomark.com/index.php/About/In-depth-measurements/Measurements/ISO-sensitivity for a good read about it. First of all, it has nothing to do with readout amplification factors. Sensors with constant readout noise and high-bit ADCs (like the new Sony sensors with column-parallel ADCs) actually need no amplification at all, as amplification is only used to suppress readout noise and quantisation noise (in practice, they do use amplification up to a certain level, like ISO 1600, but not beyond).

Re 2: Again, you are confusing amplification and sensitivity (ISO). An easy way to see this: consider two sensors of equal size but with different pixel counts. Then your argument would lead to the (false) conclusion that the sensors should use different ISO settings.

The fact that, in a simplified model, the pixels of type-1 equivalent cameras do indeed use the same amplification despite their different ISO settings is actually a nice way to understand how camera equivalence works and why the ISO setting is not a constant in an equivalent comparison.
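The simplified model can be written down in a few lines. This sketch assumes that focal-plane exposure H is proportional to t/N² for a fixed scene, that the ISO needed for correct exposure is inversely proportional to H, and that photons per pixel are H times the area of one pixel; it ignores quantum efficiency, fill factor and vignetting. The sensor dimensions and the 24 MP pixel count are hypothetical.

```python
def relative_exposure(f_number: float, shutter_s: float) -> float:
    """Focal-plane exposure H in arbitrary units for a fixed scene: H ~ t / N**2."""
    return shutter_s / f_number**2


def photons_per_pixel(f_number: float, shutter_s: float,
                      sensor_area_mm2: float, n_pixels: float) -> float:
    """Relative photons per pixel: exposure H times the area of one pixel."""
    return relative_exposure(f_number, shutter_s) * sensor_area_mm2 / n_pixels


# Hypothetical type-1 pair: identical pixel count, crop factor k = 2, same shutter time.
k = 2.0
t = 1 / 125
ff = dict(f_number=2.0, shutter_s=t, sensor_area_mm2=36 * 24, n_pixels=24e6)
crop = dict(f_number=2.0 / k, shutter_s=t, sensor_area_mm2=36 * 24 / k**2, n_pixels=24e6)

# ISO for correct exposure is inversely proportional to H: ISO_crop / ISO_ff = H_ff / H_crop.
iso_crop_over_ff = relative_exposure(2.0, t) / relative_exposure(2.0 / k, t)
print(iso_crop_over_ff)  # 0.25: the crop camera runs at a quarter of the ISO

# Yet each pixel collects the same amount of light, so the same amplification suits both.
print(photons_per_pixel(**ff), photons_per_pixel(**crop))
```

The crop sensor sees four times the exposure per unit area but has pixels with a quarter of the area, so the two effects cancel per pixel.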

Nice article and sharing. Appreciate your effort. Keep up the good work.

Can you please apply your analysis to the LX7 vs RX100 debate currently raging across the internet? The LX7 has a faster lens, while the RX100 has a larger sensor and more pixels. According to your analysis, which camera has the better specifications?

P.S. Sorry to post anonymously; my nickname is noirist.

No problem.

The cameras have different aspect ratios, so I'll compare by image diagonal rather than by the square root of the sensor area (both methods give very similar results, though).

The LX7 has a crop factor of 5.1 (using both the focal length in the specs and the one on the lens), the RX100 one of 2.7. So, the 35mm-equivalent specifications read as follows:

LX7: 24-90mm F/7.1-11.7 ISO 2100+

RX100: 28-100mm F/4.9-13.2 ISO 730+

So, the RX100 is faster at the wide end (though not as wide) by about 1 stop, but this advantage disappears at the long end, where both cameras have the same aperture diameter of about 7.7mm. However, the RX100 should have much better dynamic range due to the lower equivalent base ISO and Sony's column-parallel ADC technology.

Overall, I would give the RX100 an advantage of 1 stop over the LX7, but it loses 4mm at the wide end (28mm vs. 24mm equivalent).
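The arithmetic behind this comparison can be reproduced from the 35mm-equivalent figures quoted above alone: the physical aperture diameter is d = f/N, and a ratio of F-numbers corresponds to 2·log2 of that ratio in stops. This is a sketch using only the numbers in this reply; the helper names are hypothetical.

```python
import math

# 35mm-equivalent values as quoted above: (focal length in mm, F-number)
lx7 = {"wide": (24, 7.1), "tele": (90, 11.7)}
rx100 = {"wide": (28, 4.9), "tele": (100, 13.2)}


def aperture_mm(focal_mm: float, f_number: float) -> float:
    """Physical aperture diameter d = f / N (identical in real and equivalent terms)."""
    return focal_mm / f_number


def stops(n_slow: float, n_fast: float) -> float:
    """Light difference in stops between two F-numbers."""
    return 2 * math.log2(n_slow / n_fast)


for end in ("wide", "tele"):
    d_lx7 = aperture_mm(*lx7[end])
    d_rx100 = aperture_mm(*rx100[end])
    print(f"{end}: LX7 d = {d_lx7:.1f} mm, RX100 d = {d_rx100:.1f} mm")

print(f"RX100 advantage at the wide end: {stops(7.1, 4.9):.2f} stops")
print(f"Equivalent base ISO gap: {math.log2(2100 / 730):.2f} stops")
```

With these inputs the wide-end diameters are roughly 3.4mm (LX7) and 5.7mm (RX100), the long-end diameters roughly 7.7mm and 7.6mm, the wide-end gap about 1.07 stops, and the equivalent base ISO gap about 1.5 stops.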

You miss a few important points. At equivalent settings, FF delivers more resolution, and this advantage becomes much greater when you open up wide enough. That resolution advantage can be the difference between unacceptably soft images (f/1.2 lenses on crop bodies) and quite sharp ones (f/2.0 or so on FF). This is not due to "imperfect lenses"; it follows from the fact that you can scale down everything except the refractive index of the glass used: you need to refract the light much more with crop sensors, leading to poor resolution.

Also, very often you can shoot FF at a slower shutter speed to get cleaner ISO 100 images (equivalent to ISO 40 or so on crop).

Thanks for the reply. However, I do not miss "a few important points". I actually treat them in considerable detail.

You are wrong in assuming that the finite index of refraction of glass prevents perfect lenses (perfect in the sense that they outresolve the sensor); it just makes them more expensive, which is why I say that every resolution requirement has its own sweet spot for sensor size. E.g., Zeiss just showed $3000 55mm f/1.4 lenses which fully resolve a D800 in the corners wide open (I've seen it myself). So, we are indeed talking about imperfect lenses. My equivalence statement is 100% accurate for a perfect camera/lens.

Hello Falk,

Nice article. I think you reach the same conclusions about equivalent images that I published on luminous-landscape.com as an essay in 2007. My article was titled "Why Is My 50mm Lens Equivalent to 80mm on a 35mm Camera and Why Is There More Depth-of-Field?" That title was chosen by Michael Reichmann. I used the title "What is an Equivalent Image" in my book, where it was chapter 11. If you haven't seen my book, it is titled "Science for the Curious Photographer" and is widely available. I discussed all the scaling required, including the ISO to maintain the shutter speed and the necessity of holding the aperture constant. However, I did not consider the pixel count.

Thanks for the comment. Your article is very interesting indeed. Of course, I do not claim that my article is new work. I just wanted to bring true equivalence to the attention of a broader audience.

I like your article (direct link: http://luminous-landscape.com/essays/Equivalent-Lenses.shtml). I would actually add a point "6. Noise floor".

Regarding Luminous Landscape, there is a brand-new article by Peter van den Hamer, endorsed by Eric R. Fossum, that actually cites the work done on equivalent cameras: http://www.luminous-landscape.com/essays/dxomark_sensor_for_benchmarking_cameras2.shtml

Even DPReview has recently begun adopting the notion of an "equivalent aperture". I think we are slowly achieving our goal of making people drop the F-stop as a notion for comparisons across varying sensor sizes.

My additional goal was to provide an alternative notion to replace the F-stop for such comparisons: the physical aperture diameter (in mm).

Falk,

Your white paper seems like a rigorous treatment of the subject. I can tell just reading through it that it's probably better than a lot of other resources out there, and I really want to understand it.

As a beginner, though, I wish your example in section 3.2 had been better. At the end of section 2, you list all 8 variables involved in equivalence ("The exact equivalence relationship between the reference camera and crop camera' is as follows:").

A helpful example for beginners (and probably advanced users too) would show a theoretical equivalence example that lays out each and every one of those variables and what would be required for equivalence (some variables may not be real world numbers). It would also help to flesh out your "real world" example of m4/3 in section 3.2 to explicitly compare what is happening to all 8 of the variables, not just the few variables you listed.

Stellar resource; it seems like a rigorous treatment. Pedagogically, I suspect many readers need some concrete numbers before circling back to reading section 2 to understand the nuances there. If you have some time to update your post, I think it would be a great addition.

I think my example is pretty complete. I compare 4 of the variables. 3 other variables are simply EQUAL (FoV, d, #MP type1) and the sensor size (s) is clear enough. No need to list the remaining variables in the example.

The simple question behind all this may be hard to grasp: which cameras deliver indistinguishable results? Once that question is understood, it must be clear that, e.g., the field of view (FoV) remains constant.

The single simple main result is that d (the aperture diameter in mm) is a constant across all equivalent cameras. This fact is not yet known to all photographers. The rest is just derivative work.

Falk,

How does the color sensor type affect the comparison? For example, a Bayer color filter with n pixels has significantly less measured resolution than a Foveon sensor with n pixels. The same goes for a 3-sensor array with a color prism, where each of the three sensors measures a different range of light. The color sensor type also affects the sensitivity of the sensor, since a Bayer filter reduces the amount of light captured by the sensor.

Thank you,

Noirist

Hi Noirist,

You address an interesting question: the efficiency of three different technologies for separating color. While this certainly deserves an article of its own, it does not directly affect the reasoning about equivalence. The statements about equivalence implicitly assume that the underlying technology remains the same.

Just a little comment about the other two technologies: a Bayer sensor has the same resolution as a Foveon sensor in the luminosity channel and half the resolution in the color channels (where the human eye is less capable too). So, I don't consider Foveon sensors to have a significant resolution advantage. And unfortunately, the color prism approach does not scale to very high resolutions as color aberrations become too large. However, the approach of trichroic prism arrays to replace the Bayer array is a promising one.

Well, I hope you write that extra article! From my limited understanding, I would expect that the sensor technology does not affect field of view or depth of field. But clearly it affects resolution and ISO. Looking forward to your next article,

Noirist

UPDATE, 2014 July 7: DP Review has published an article about equivalence, which marks a milestone: camera equivalence must now be considered common wisdom and should no longer be the topic of heated internet discussions -> http://www.dpreview.com/articles/2666934640/what-is-equivalence-and-why-should-i-care (nevertheless, 2 days later, the article has 1000 comments ...).

In the comment section, there is a discussion clarifying the origin of the concept. I'd like to refer to it for easier future reference. Here we go:

1. Early mention of many ideas, w/o coining the term: 2006, Jan 16, Pieter (pidera) -> http://www.dpreview.com/forums/post/16738985

2. Early mention of the term "equivalent": 2006, Dec 29, Joseph James (joe mama) -> http://www.dpreview.com/forums/post/21454287

3. On 2007, Jan 11, Daniel Buck tried to explain the Brenizer method (which was not invented by Ryan Brenizer, who picked it up from him a year later). Equivalence easily explains the effect (stitching effectively creates a larger sensor), yet the participants in that internet discussion did not know about it, marking a point in time when equivalence was not known to most photographers -> http://www.fredmiranda.com/forum/topic/544062/

4. The Equivalence Essay written by Joseph James was first published 2007, August 16:

-> http://www.dpreview.com/forums/post/24394461

-> http://www.josephjamesphotography.com/equivalence/index.htm

Therefore, this may indeed serve as the root publication coining the term "Equivalence", following up on earlier forum discussions.

5. I don't know when the equivalence theorem described in my article was established. The theorem goes beyond the early articles in highlighting that it is physically impossible to determine sensor size from inspecting image data from an ideal (diffraction-limited) camera. The equivalence theorem treats diffraction and lens aberrations too.

kind regards,

Falk

UPDATE: I may be a little late to the party, but I highly recommend the video discussion of equivalence by Tony Northrup, found here: http://youtu.be/DtDotqLx6nA .

Especially if you have reservations about the entire concept, it may really help you widen your horizon enough to see the broader landscape of the topic. And it is a lot of fun to watch, too!